UBQT

Demo

Introduction

This program was developed in partial fulfilment for the degree of BSc (Hons) Computer Science at Dublin Institute of Technology. The full Dissertation can be downloaded below.

Around the time I needed to come up with an idea for my final year project I read Prey by Michael Crichton. It inspired me to make my project about an intelligent swarm of agents. Making them navigate a 2D environment seemed like a straightforward visually pleasing choice. The most complicated environment to navigate is a maze. I was curious how a swarm of agents would efficiently handle such a task. But first I needed to decide in which format mazes/maps will be input into my program. While a grid-based solution would have been perfectly adequate, I wanted something that offered more variety, agility, and challenge. Letting users simply load a bitmap image looked like an option that promised the most accessibility and creative freedom for users. This, however, created a new challenge. Bitmap images would need to be converted into something that is easier for a steering AI to perceive.

Image Manipulation

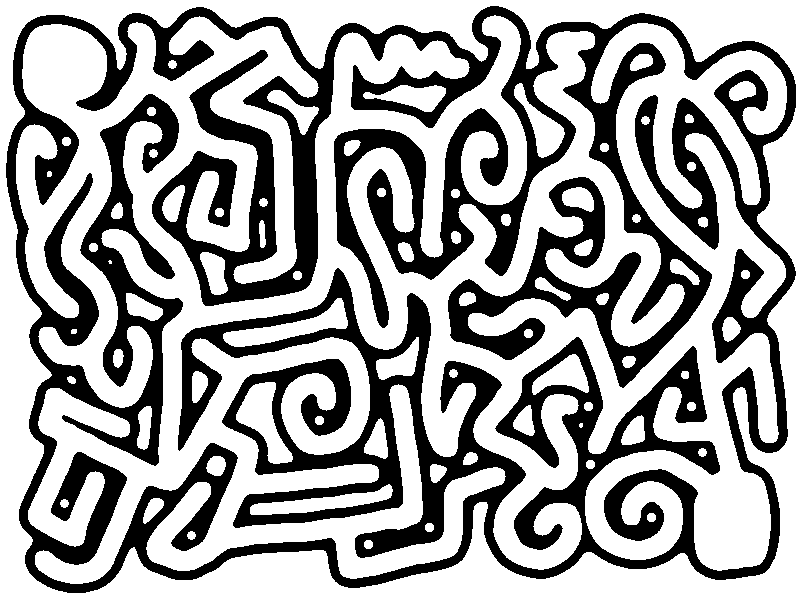

After the user chooses which image to load, it is then necessary to define which parts of the image are navigable and which are obstacles. The program provides several thresholding modes and a tolerance slider for this purpose. Next the program performs floodfill to remove any navigable space that cannot be reached from the starting location. Finally, edge extraction is performed which only leaves 1-pixel thick outline of reachable navigable space.

Seamstress Algorithm

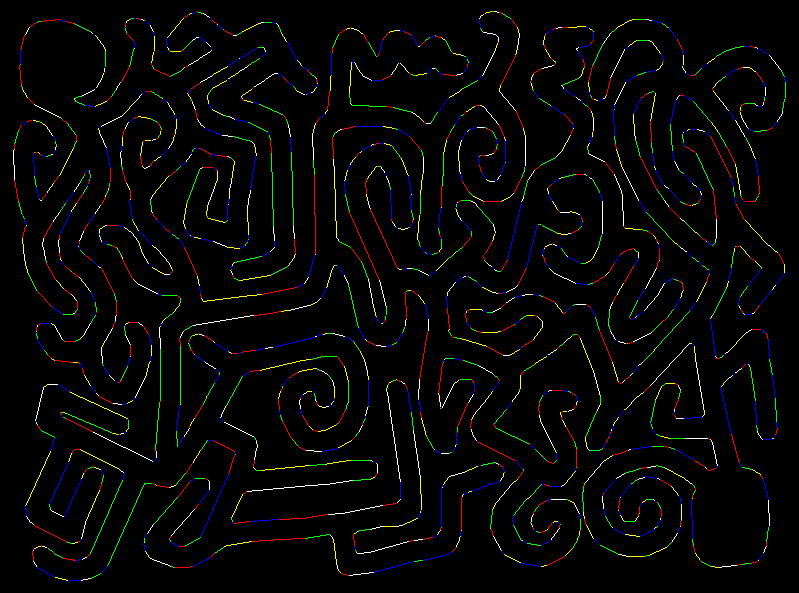

The environment will be fully generated after all the black pixels were replaced with lines. The idea of the algorithm is to identify patterns of pixels which altogether depict a straight line. Also, there is need to allow the algorithm to accept small variations in patterns so as to keep the number of generated lines at a reasonable level. For example, a 19 and a 20 pixel long segment can be treated as sufficiently similar, but not a 4 and a 5 pixel long segment.

To identify a pattern, a border segment is picked first. A segment is a sequence of black pixels, which come as a row or as a column without a single gap. Properties such as length and orientation (horizontal or vertical) are identified. After that its neighbouring segments are analysed. If a neighbour shares similar properties, they can be treated as a single line. The next step is to move in the direction of the picked neighbour and check if there are any more segments with similar qualities. This is done until a segment is reached that doesn’t share the same properties. At this point the line is added to the collection and the pixels that make up the line are removed. Here is a short step-by-step demonstration of the algorithm's logic (pdf).

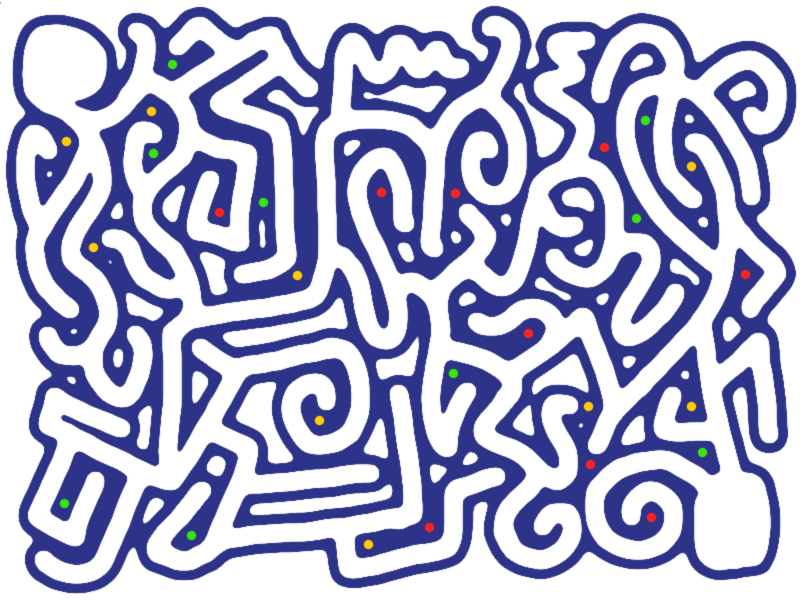

For example, if the following image was used as input:

And the user chose the following thresholding:

The output would be a collection of 2150 lines. The image below shows how these lines would be arranged. Alternating colours are used to depict neighbouring lines so that it is possible to see where one line ends and another begins.

Swarm

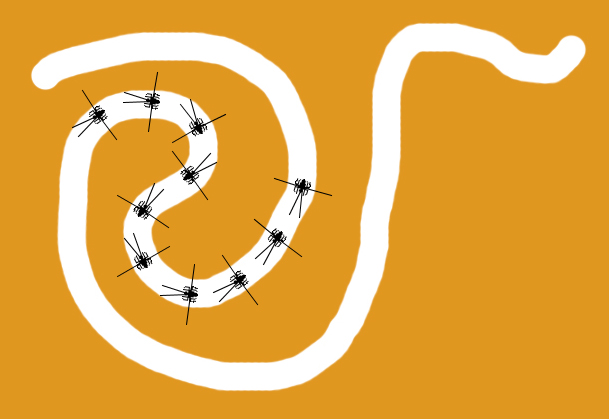

After all the obstacles were calculated a swarm of 10 agents is released at the selected starting location.

Each agent has two sensors in the front and two side sensors that are used for steering. Agents have constant speed and will move in the direction their vector is pointing. Each update cycle this vector is updated using input from sensors. The closer the intersection between the sensor and the wall to the agent, the more it affects the vector.

When an agent identifies a junction, it places a node in the middle of it with vectors pointing in the direction of each tunnel. Green vectors are not explored and will have the highest priority when an agent picks a direction. Yellow vectors are currently being explored. Agents will evenly split between yellow vectors at each junction. And red vectors are known to not lead to the destination. Eventually when the swarm finished exploring the environment all agents would have arrived at the destination and all nodes will have a yellow vector point in the direction of the destination.